Oracle has launched something of a bare metal cloud that takes advantage of Nvidia’s H100 Tensor Core GPUs and is intended for running heavy-duty generative AI and LLM workloads. A more budget-minded offering using Nvidia’s L40S GPU will be available early next year.

Oracle Cloud (which Larry Ellison wants you to call Oracle Cloud Infrastructure because that sounds so IBM-ish, AWS-ish, or even Red Hat-ish — you know, almost respectable) wants to cash in on the latest tech craze, which right now is Generative AI and Large Language Models. Currently, all of data tech seems to be playing one giant computer game to determine who can effectively monetize either or both of these different-sides-of-the-same-coin and who will be destroyed by them.

No matter what social benefits we might or might not eventually reap from the bold new world of super AI, for the time being the chief benefit is profit for the tech brokers.

Ellison, of course, wants some of that profit, because Ellison’s gotta Ellison, and being the richest dude in California (when Musk isn’t in town) is sometimes not enough, so he’s going all in with the chip makers, because he can see that the GPU dudes and dudettes are already making big AI bucks. This has him betting the farm by buying warehouses (measured in data centers) of Nvidia GPUs.

So, What Does Oracle Plan to Do With Nvidia’s Chips?

All kidding aside, on Tuesday at Oracle CloudWorld in San Francisco, and simultaneously in a blog post written by Dave Salvator, Nvidia’s director of product marketing for accelerated computing products, it was announced that tons of GPUs are available for you to use in something of a bare metal sense on Oracle’s cloud.

“With generative AI and large language models driving groundbreaking innovations, the computational demands for training and inference are skyrocketing,” Salvator said. “These modern-day generative AI applications demand full-stack accelerated compute, starting with state-of-the-art infrastructure that can handle massive workloads with speed and accuracy. To help meet this need, Oracle Cloud Infrastructure today announced general availability of Nvidia H100 Tensor Core GPUs on OCI Compute, with Nvidia L40S GPUs coming soon.”

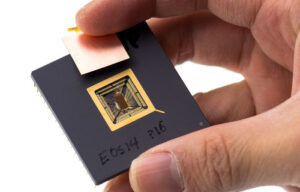

The H100 Tensor Core GPUs that are already available on Oracle’s cloud are definitely impressive. Powered by the Nvidia Hopper architecture, these were specifically designed for large-scale AI and high performance computing infrastructures, offering more than enough performance, scalability and versatility for just about any workload:

“Organizations using Nvidia H100 GPUs obtain up to a 30x increase in AI inference performance and a 4x boost in AI training compared with tapping the Nvidia A100 Tensor Core GPU. The H100 GPU is designed for resource-intensive computing tasks, including training LLMs and inference while running them.”

[The increases in inference performance and AI training come from figures supplied by Nvidia.]
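To put those "up to" multipliers in perspective, here is a minimal sketch of the arithmetic. The workload durations are hypothetical, picked only for illustration, and the result is a best case that assumes the entire vendor-quoted speedup is realized, which real jobs rarely manage.

```python
def best_case_hours(baseline_hours: float, speedup: float) -> float:
    """Lower bound on runtime if the full quoted speedup were realized."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline_hours / speedup


# Vendor-quoted "up to" multipliers for the H100 relative to the A100.
H100_TRAINING_SPEEDUP = 4.0
H100_INFERENCE_SPEEDUP = 30.0

# Hypothetical A100 workloads (assumptions, not figures from Nvidia or Oracle).
a100_training_hours = 120.0
a100_inference_hours = 15.0

print(best_case_hours(a100_training_hours, H100_TRAINING_SPEEDUP))    # 30.0
print(best_case_hours(a100_inference_hours, H100_INFERENCE_SPEEDUP))  # 0.5
```

Because the figures are ceilings, the numbers printed here are the shortest runtimes the quote could justify, not a forecast.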

“The BM.GPU.H100.8 OCI Compute shape includes eight Nvidia H100 GPUs, each with 80GB of HBM2 GPU memory. Between the eight GPUs, 3.6 TB/s of bisectional bandwidth enables each GPU to communicate directly with all seven other GPUs via Nvidia NVSwitch and NVLink 4.0 technology. The shape includes 16 local NVMe drives with a capacity of 3.84TB each and also includes Intel Xeon Platinum 8480+ CPU processors with 112 cores, as well as 2TB of system memory. In a nutshell, this shape is optimized for organizations’ most challenging workloads.”
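For a sense of scale, the per-component figures in that quote can be totaled up. The sketch below is only back-of-the-envelope arithmetic: the `BareMetalShape` class and the `weights_fit` helper are hypothetical names invented for this example, not part of any Oracle or Nvidia API, and the fit check assumes 2 bytes per parameter (16-bit weights) while ignoring activations, KV cache and optimizer state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BareMetalShape:
    """Hypothetical container for the specs quoted for a compute shape."""
    name: str
    gpus: int
    gpu_memory_gb: int
    nvme_drives: int
    nvme_drive_tb: float
    cpu_cores: int
    system_memory_tb: int

    @property
    def total_gpu_memory_gb(self) -> int:
        return self.gpus * self.gpu_memory_gb

    @property
    def total_nvme_tb(self) -> float:
        return round(self.nvme_drives * self.nvme_drive_tb, 2)

    @property
    def peer_gpus(self) -> int:
        # Each GPU can talk directly to every other GPU in the node.
        return self.gpus - 1


def weights_fit(shape: BareMetalShape, params_billion: float,
                bytes_per_param: int = 2) -> bool:
    """Rough check: do the model weights alone fit in aggregate GPU memory?"""
    needed_gb = params_billion * bytes_per_param  # 1e9 params * bytes / 1e9
    return needed_gb <= shape.total_gpu_memory_gb


# Figures taken from the quoted description of the shape.
H100_SHAPE = BareMetalShape("BM.GPU.H100.8", gpus=8, gpu_memory_gb=80,
                            nvme_drives=16, nvme_drive_tb=3.84,
                            cpu_cores=112, system_memory_tb=2)

print(H100_SHAPE.total_gpu_memory_gb)  # 640
print(H100_SHAPE.total_nvme_tb)        # 61.44
print(weights_fit(H100_SHAPE, 70))     # True
```

So a single node carries 640GB of GPU memory and a little over 61TB of local NVMe, at least on paper.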

While the H100 shape sits at the high end of Oracle Cloud’s Nvidia lineup, an even more powerful option built on the same H100 GPU will start rolling out soon, according to Salvator:

“Depending on timelines and sizes of workloads, OCI Supercluster allows organizations to scale their Nvidia H100 GPU usage from a single node to up to 50,000 H100 GPUs over a high-performance, ultra-low-latency network. This capability will be generally available later this year in the Oracle Cloud London and Chicago regions, with additional locations to follow.”
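A quick sketch of what that ceiling implies. It assumes, and this is an assumption rather than something Salvator states, that a Supercluster is assembled from eight-GPU nodes like the BM.GPU.H100.8 shape, each GPU carrying 80GB of memory. The function names are made up for the example.

```python
import math

GPUS_PER_NODE = 8               # assumption: nodes match the BM.GPU.H100.8 shape
GPU_MEMORY_GB = 80              # per-GPU memory quoted for that shape
MAX_SUPERCLUSTER_GPUS = 50_000  # upper limit from the quote


def nodes_needed(gpu_count: int, gpus_per_node: int = GPUS_PER_NODE) -> int:
    """Whole nodes required to supply at least gpu_count GPUs."""
    if gpu_count < 1:
        raise ValueError("gpu_count must be at least 1")
    return math.ceil(gpu_count / gpus_per_node)


def aggregate_gpu_memory_tb(gpu_count: int,
                            gpu_memory_gb: int = GPU_MEMORY_GB) -> float:
    """Total GPU memory across the cluster, in decimal terabytes."""
    return gpu_count * gpu_memory_gb / 1000


print(nodes_needed(MAX_SUPERCLUSTER_GPUS))             # 6250
print(aggregate_gpu_memory_tb(MAX_SUPERCLUSTER_GPUS))  # 4000.0
```

Under those assumptions, the top end works out to 6,250 nodes and roughly 4 petabytes of GPU memory.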

Coming Soon: Bargain Basement AI

Again, both of these offerings are top shelf and will definitely be priced accordingly. There will be a more affordable option coming early next year, however, for those with small- to medium-sized AI workloads, as well as for graphics and video jobs:

“The Nvidia L40S GPU, based on the Nvidia Ada Lovelace architecture, is a universal GPU for the data center, delivering breakthrough multi-workload acceleration for LLM inference and training, visual computing and video applications. The OCI Compute bare-metal instances with Nvidia L40S GPUs will be available for early access later this year, with general availability coming early in 2024.”

While nowhere near as impressive as the H100’s gains over Nvidia’s A100 Tensor Core GPUs, the L40S still shows a 20% performance boost for generative AI workloads and as much as a 70% improvement in AI training.


Christine Hall has been a journalist since 1971. In 2001, she began writing a weekly consumer computer column and started covering Linux and FOSS in 2002 after making the switch to GNU/Linux. Follow her on Twitter: @BrideOfLinux