When models can audit firmware and legacy binaries at scale, hiding vulnerabilities stops working. Open, patchable code becomes a core security requirement.

About a week ago, someone shared a story with me from Microsoft’s Mark Russinovich that got me thinking. He took a binary listing from a 1986 Compute magazine article he had written called “Better Branching in AppleSoft.” This was 6502 machine language from the Apple IIe, published as raw hex in print, with no source code. He fed it to a frontier AI model, which reconstructed the program logic with labels, comments, and explanations. It identified the programmer’s intent behind code written four decades ago, all from the binary. Then, it ran a security audit and caught a subtle bug where a routine failed to check a carry flag after a line search.

This is the kind of thing that makes you set your coffee down and think for a minute.

Reverse engineering tools have existed for a long time. Disassemblers, decompilers, and binary analysis frameworks are well-established parts of the security toolkit. What changed is how casually this capability now exists. Anyone with access to a frontier model can point it at a compiled binary and ask what it does. No specialized tooling. No deep expertise in the target architecture. A prompt and a few minutes.

The implications cascade. If a model can understand obscure 1980s architectures this fluently, then the effective attack surface now includes every compiled binary ever shipped: firmware, drivers, embedded systems, old industrial controllers. Systems that have been quietly running for twenty years because auditing them was slow and expensive are suddenly legible.

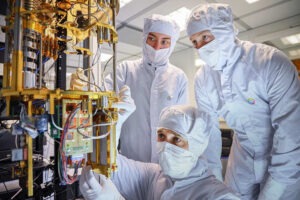

Companies like Binarly are already ahead of the curve here, applying AI to binary analysis for vulnerability discovery and firmware security assessment. This is a real, growing field at the intersection of AI capability and open source security infrastructure. The ability to scan firmware and compiled binaries at scale for known vulnerability patterns is a genuine defensive advantage, and it is most powerful when paired with open source codebases that can be patched and redeployed in response, without waiting for proprietary gatekeepers.
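
To make "known vulnerability patterns" concrete, here is a deliberately naive sketch in Python. It is not how Binarly or any frontier model works. It only shows the shape of the idea, using the kind of bug from the Russinovich story: a 6502 routine that signals its result in the carry flag, and a caller that never checks it. The routine address `0x0300`, the sample bytes, and the function name are all hypothetical, and a real tool would decode instructions properly rather than match raw bytes.

```python
# Naive pattern scan over 6502 machine code (illustrative assumptions throughout).
JSR = 0x20                     # 6502 opcode: jump to subroutine (absolute address)
CARRY_BRANCHES = {0x90, 0xB0}  # 6502 opcodes: BCC and BCS (branch on carry clear/set)

# Hypothetical address of a routine that reports success or failure via the carry flag.
SEARCH_ROUTINE = 0x0300


def find_unchecked_calls(code: bytes, target: int) -> list[int]:
    """Return offsets of `JSR target` not immediately followed by BCC or BCS."""
    # 6502 absolute addresses are stored low byte first.
    pattern = bytes([JSR, target & 0xFF, target >> 8])
    hits = []
    pos = code.find(pattern)
    while pos != -1:
        following = pos + len(pattern)
        if following >= len(code) or code[following] not in CARRY_BRANCHES:
            hits.append(pos)
        pos = code.find(pattern, pos + 1)
    return hits


# Made-up sample: the first call checks the carry flag, the second does not.
CHECKED = bytes([0x20, 0x00, 0x03, 0x90, 0x03, 0x60])    # JSR $0300; BCC +3; RTS
UNCHECKED = bytes([0x20, 0x00, 0x03, 0xA9, 0x00, 0x60])  # JSR $0300; LDA #$00; RTS
SAMPLE = CHECKED + UNCHECKED

if __name__ == "__main__":
    for offset in find_unchecked_calls(SAMPLE, SEARCH_ROUTINE):
        print(f"possible unchecked carry flag after call at offset {offset}")
```

A scan like this will produce false positives and miss anything slightly rearranged, which is exactly the gap that model-assisted analysis is starting to close.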

I think about this the same way I think about genomics, which is where my career began. We can sequence the entire genome of an organism. We can recognize patterns within it. We can distinguish a monkey genome from a human genome by identifying common differences in small pattern snippets. What we cannot do is build an emulator that shows us what the individual will look like based on the chromosomes alone. We see the code, but the full context, the expression, the emergent behavior, remains elusive.

Binaries are vastly simpler than genomes, but the analogy holds. Today’s AI models are extraordinarily good at pattern matching and at reasoning through higher-level logic. They are less reliable at the precise mathematical operations that underpin compiled code. They can read the gist. They can surface intent. They can flag anomalies. Fully reconstructing a complex codebase from its compiled output, with all the architectural decisions, conditionals, and contextual knowledge that shaped the original source, remains beyond reach.

But consider this scenario: an AI agent can now scan thousands of compiled binaries in an afternoon, surfacing potential vulnerabilities in firmware, proprietary applications, and legacy systems. Attackers gain a powerful new reconnaissance tool. Defenders, meanwhile, can use the same capability to audit their own software. But only defenders who have access to source code can act on what they find. Organizations running proprietary binaries are left knowing about vulnerabilities they cannot fix. The discovery scales, but the remediation does not.

This is the core of why source code availability becomes increasingly critical as AI-powered binary analysis matures. When binaries were effectively opaque, security through obscurity had at least a practical, if philosophically unsatisfying, foundation.

That foundation is eroding. In a world where AI can read everything ever compiled, the organizations that will weather the storm are the ones with access to source, the tooling to patch, and the community infrastructure to respond at speed.

This is an extension of the scenario that motivated us to create OpenELA, a community-driven effort to ensure that the Enterprise Linux source code stays open and available. At the time, the argument was grounded in principles of transparency, user freedom, and the practical need for downstream distributions to exist. Those arguments remain valid. AI-powered binary analysis adds a new and urgent dimension: in a threat landscape where compiled code is no longer opaque, source access is the prerequisite for defense. Without it, organizations can see the problem but cannot reach the fix.

There is another thread worth pulling here. One of my friends had been holding onto an old proprietary binary for a mind-mapping application that was no longer available. It was the best tool he had ever used for that purpose, so he kept the binary alive across years of system upgrades. Recently, in a few prompts, he was able to create a new software project that replicated the core functionality of that tool and even built an interface to import files in its proprietary binary format.

This kind of AI-powered reimplementation is becoming routine. And it raises a question that the open source community should take seriously: if the functionality of any given piece of software can be recreated from a description of its behavior, what remains as the durable source of value?

I believe the answer is everything that surrounds the code: ecosystem, certifications, compliance infrastructure, and supply chain integrity. The community that maintains, tests, and secures the software over time becomes the moat for every ounce of differentiation built further up the stack.

Code itself is becoming more fluid, more disposable, more easily regenerated. The trust chain that validates and supports that code is not. Community-driven open source provides something that a prompted reimplementation cannot: provenance, accountability, and continuity.

The security implications cut in a more constructive direction as well, as the earlier reference to Binarly shows.

The question is worth sitting with: are we updating our threat models for a world where AI can read everything ever compiled, or are we still deluding ourselves that binaries are opaque? For much of the industry, the answer is the latter. The assumption that compiled code is difficult to analyze still underpins a great deal of security thinking, procurement policy, and software licensing strategy.

Sure, this is somewhat about futures, as the ability to do this at the scale of Enterprise Linux remains on the horizon. But AI has taught us that what works poorly today will work pretty well in six months and will amaze us in a year. The open source community has an opportunity to lead the response to this new reality. We have the transparency, collaborative infrastructure, and culture of public auditing and rapid patching. What we need is the recognition, across the industry, that source code availability is a security imperative for the AI era.

Guest writer Gregory Kurtzer, CEO and founder of CIQ, is an open source pioneer who began building scientific computing infrastructure at Lawrence Berkeley National Laboratory, where he created the Warewulf cluster management toolkit and co-founded CentOS. He went on to create Singularity and later founded CIQ to develop Fuzzball, a cloud-native orchestration platform designed for modern AI and scientific research. He later founded Rocky Linux and helped create the Open Enterprise Linux Association.
